David Watkins-Valls, Jacob Varley, and Peter Allen

Columbia Robotics Lab

Abstract

This work presents an architecture that combines depth and tactile information to create rich and accurate 3D models for robotic manipulation tasks, using a 3D convolutional neural network (CNN). Offline, the network is given both depth and tactile information and trained to predict the object's full geometry, thereby filling in occluded regions. At runtime, the network is provided with a partial view of an object, and tactile information is acquired to augment the captured depth information. The network then reasons about the object's geometry using both the collected tactile and depth information. We demonstrate that even small amounts of additional tactile information can be highly beneficial for reasoning about object geometry, particularly when depth information alone fails to produce an accurate geometric prediction. Our method is benchmarked against, and outperforms, other visual-tactile approaches to general geometric reasoning. We also report experimental results on grasping success using our method.
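As an illustration of how depth and tactile observations could be merged into a single input for a 3D CNN, the following Python sketch voxelizes both point sets into one 40³ occupancy grid. The 40³ resolution matches the training-data description on this page; the function names, grid origin, and voxel size are hypothetical assumptions, and this is not the authors' released preprocessing code.

```python
from typing import Iterable, List, Set, Tuple

# Hypothetical preprocessing sketch; not the released CRLab code.
Point = Tuple[float, float, float]
Voxel = Tuple[int, int, int]
Grid = List[List[List[int]]]


def voxelize(points: Iterable[Point], origin: Point, voxel_size: float,
             resolution: int = 40) -> Set[Voxel]:
    """Map 3D points to the set of occupied voxel indices in a resolution^3 grid.

    Points that fall outside the grid are dropped.
    """
    occupied: Set[Voxel] = set()
    for p in points:
        idx = tuple(int((p[i] - origin[i]) // voxel_size) for i in range(3))
        if all(0 <= c < resolution for c in idx):
            occupied.add(idx)
    return occupied


def build_input_grid(depth_points: Iterable[Point], tactile_points: Iterable[Point],
                     origin: Point, voxel_size: float, resolution: int = 40) -> Grid:
    """Combine depth and tactile observations into one dense 0/1 occupancy grid.

    Returns a nested list grid[x][y][z]; a voxel is 1 if any depth or
    tactile point falls inside it (assumed encoding, for illustration only).
    """
    occupied = voxelize(depth_points, origin, voxel_size, resolution)
    occupied |= voxelize(tactile_points, origin, voxel_size, resolution)
    grid = [[[0] * resolution for _ in range(resolution)] for _ in range(resolution)]
    for x, y, z in occupied:
        grid[x][y][z] = 1
    return grid


if __name__ == "__main__":
    depth = [(0.1, 0.1, 0.1), (0.3, 0.1, 0.1)]  # camera-visible surface points
    tactile = [(0.1, 0.1, 9.9)]                 # contact on the occluded far side
    grid = build_input_grid(depth, tactile, origin=(0.0, 0.0, 0.0), voxel_size=0.25)
    print(sum(v for plane in grid for row in plane for v in row))
```

With `voxel_size=0.25` (for example, in centimeters) the grid covers a 10-unit cube. A single tactile contact on the far side of the object sets a voxel the depth camera could not see, which is the kind of extra evidence the abstract describes.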

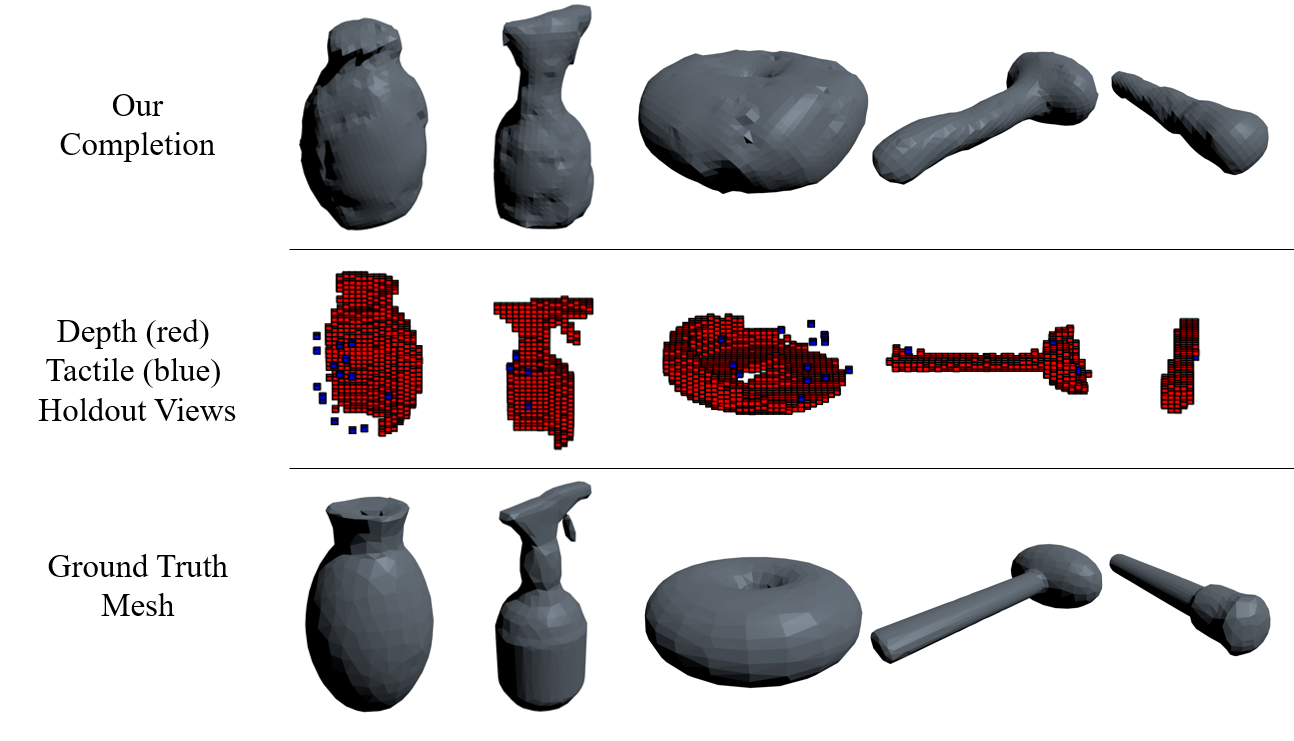

Completion Examples

We created a database of training examples and completions of those examples. See completions here.

Downloads

Source code + Trained Model (Keras 2.0)

- ROS workspace with setup instructions: https://github.com/CRLab/pc_scene_completion_ws

- Trained Model: here

Training data

- 726 point clouds for each of 608 objects, as well as the 428,340 corresponding aligned 40³ (x, y) training example pairs, from the Grasp and YCB Databases: here
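One way to picture an aligned (x, y) training pair is as two 40³ occupancy grids in the same voxel frame: x as the partial observation given to the network and y as the completed target. The Python sketch below assumes exactly that; the `TrainingPair` class, the 0/1 encoding, and `observed_fraction` are hypothetical and do not describe the format of the released files.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical container; the released dataset may be stored differently.
Grid = List[List[List[int]]]  # nested grid[i][j][k] of 0/1 occupancy values


def _check_grid(name: str, grid: Grid, resolution: int) -> None:
    """Raise ValueError unless grid is a resolution^3 nested list of 0/1 values."""
    shape_ok = len(grid) == resolution and all(
        len(plane) == resolution and all(len(row) == resolution for row in plane)
        for plane in grid
    )
    if not shape_ok:
        raise ValueError(f"{name} must be {resolution}x{resolution}x{resolution}")
    if any(v not in (0, 1) for plane in grid for row in plane for v in row):
        raise ValueError(f"{name} must contain only 0/1 occupancy values")


@dataclass
class TrainingPair:
    x: Grid              # assumed: partial observation (input to the network)
    y: Grid              # assumed: completed ground-truth occupancy (target)
    resolution: int = 40

    def __post_init__(self) -> None:
        # "Aligned" is taken to mean both grids share one shape and voxel frame.
        _check_grid("x", self.x, self.resolution)
        _check_grid("y", self.y, self.resolution)

    def observed_fraction(self) -> float:
        """Fraction of occupied target voxels that are already occupied in x."""
        total = hit = 0
        for px, py in zip(self.x, self.y):
            for rx, ry in zip(px, py):
                for vx, vy in zip(rx, ry):
                    total += vy
                    hit += vx & vy
        return hit / total if total else 0.0
```

A low `observed_fraction` marks an example where most of the shape is hidden from the camera, which is where the abstract reports that extra tactile information matters most.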

Ground Truth Meshes

The ground truth meshes are not ours to distribute. To get them, please register at:

- Grasp Database: http://grasp-database.dkappler.de/

- YCB Database: http://rll.eecs.berkeley.edu/ycb/

Citation

This work was accepted to ICRA 2019. arXiv link: here